But clearly, this second app suffers far less than the first app. With the current way of measuring the throttling level, it will arrive at the same percentage: 80%.

It will then be completed in the fifth period. A task with 400ms processing time will run 80ms and then be throttled for 20ms in each of the first four enforcement periods of 100ms. Consider a second container application that has a CPU limit of 800m, as shown below. There is a significant bias with this measurement.

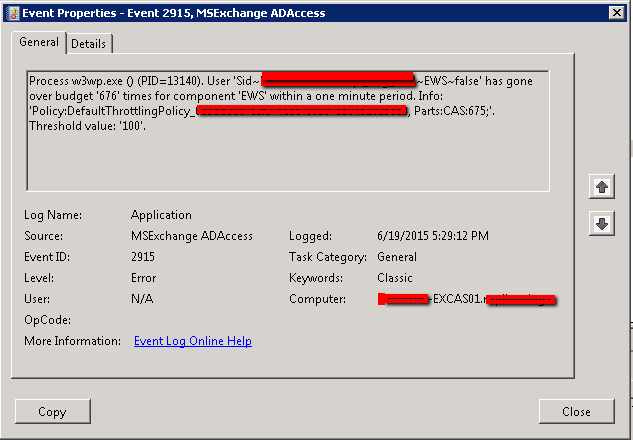

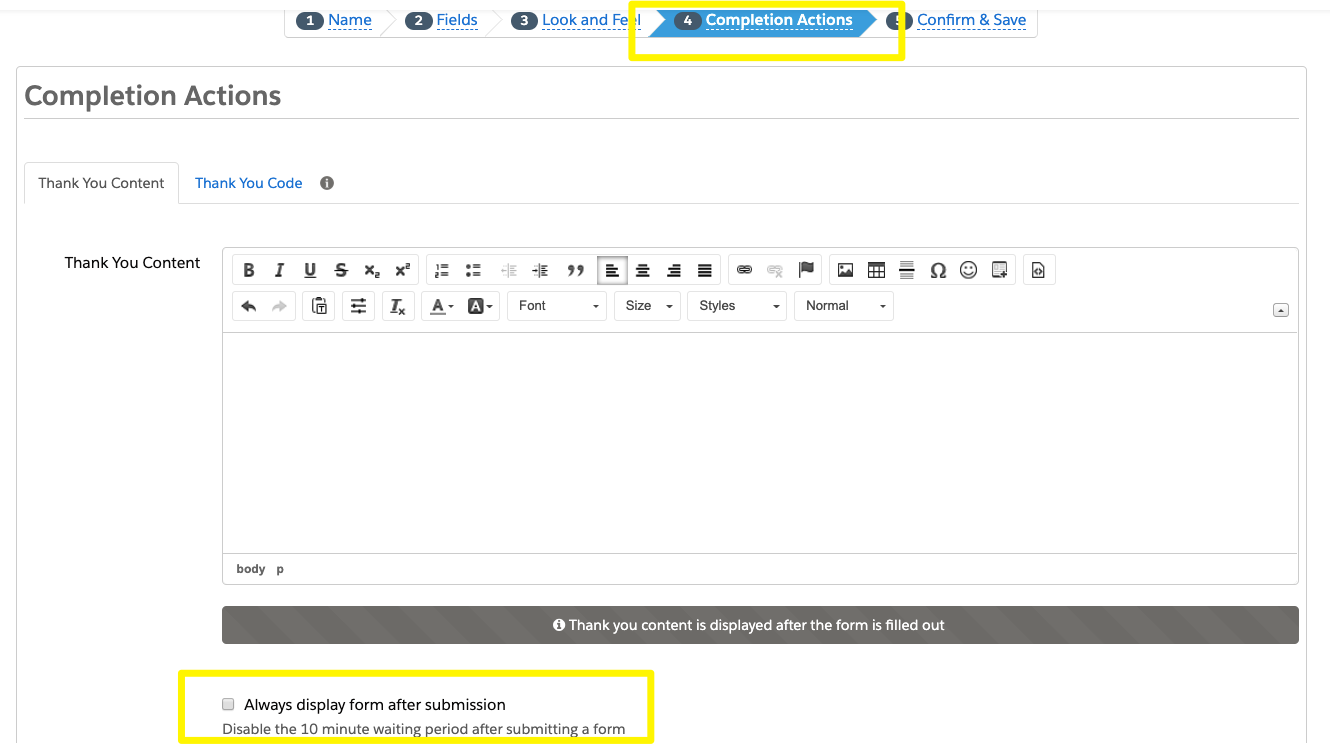

In the above example, that would be 4 / 5 = 80%. The old/biased way: Period-based throttling measurementĪs mentioned at the beginning, we used to measure the throttling level as the percentage of runnable periods that are throttled. Therefore, the total throttled time is 60ms * 4 = 240ms. In the fifth period, the request is completed, so it is no longer throttled.Ĭontainer_cpu_cfs_throttled_seconds_totalįor the first four periods, it runs for 40ms and is throttled for 60ms. It is throttled for only four out of the five runnable periods. Linux provides the following metrics related to throttling, which cAdvisor monitors and feeds to Kubernetes: Linux MetricĬontainer_cpu_cfs_throttled_periods_total Overall, the 200ms task takes 100 * 4 40 = 440ms to complete, more than twice the actual needed CPU time: This repeats four times for a 200ms task (like the one shown below) and finally gets completed in the fifth period without being throttled. That means that the app can only use 40ms of CPU time in each 100ms period before it is throttled for 60ms. The 400m limit in Linux is translated to a cgroup CPU quota of 40ms per 100ms, which is the default quota enforcement period in Linux that Kubernetes adopts. There it is a single-threaded container app with a CPU limit of 0.4 core (or 400m). If you watch this demo video, you can see a similar illustration of throttling.
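The arithmetic for the two apps can be checked with a small simulation. This is only a minimal sketch under simplifying assumptions: a single-threaded task that starts exactly at a period boundary, runs until its quota is used up, and then waits for the next period. The names `simulate_cfs` and `ThrottleStats` are hypothetical helpers made up for illustration; they are not part of Linux, cAdvisor, or Kubernetes.

```python
from dataclasses import dataclass


@dataclass
class ThrottleStats:
    periods: int            # runnable enforcement periods
    throttled_periods: int  # periods in which the task was throttled
    throttled_ms: int       # total time spent throttled
    wall_clock_ms: int      # time from task start to completion

    @property
    def period_based_pct(self) -> float:
        """Period-based throttling level: throttled periods / runnable periods."""
        return 100 * self.throttled_periods / self.periods


def simulate_cfs(task_ms: int, quota_ms: int, period_ms: int = 100) -> ThrottleStats:
    """Simplified model of quota enforcement for one single-threaded task.

    Assumption: the task starts at a period boundary and has the CPU to
    itself until its quota for that period is exhausted.
    """
    if task_ms <= 0 or quota_ms <= 0 or period_ms <= 0:
        raise ValueError("task_ms, quota_ms and period_ms must be positive")

    budget = min(quota_ms, period_ms)  # one thread cannot use more than a full period
    remaining = task_ms
    periods = throttled_periods = throttled_ms = wall_clock_ms = 0

    while remaining > 0:
        periods += 1
        run = min(budget, remaining)
        remaining -= run
        if remaining > 0 and run < period_ms:
            # Quota exhausted with work left: wait out the rest of the period.
            throttled_periods += 1
            throttled_ms += period_ms - run
            wall_clock_ms += period_ms
        else:
            wall_clock_ms += run

    return ThrottleStats(periods, throttled_periods, throttled_ms, wall_clock_ms)


# First app: 400m limit (40ms quota), 200ms task.
first = simulate_cfs(task_ms=200, quota_ms=40)
# Second app: 800m limit (80ms quota), 400ms task.
second = simulate_cfs(task_ms=400, quota_ms=80)

for name, stats in (("400m app", first), ("800m app", second)):
    print(name, stats, f"period-based level: {stats.period_based_pct:.0f}%")
```

In this model both apps report the same period-based level, yet the first one spends 240ms throttled while the second spends only 80ms, which is the bias described above.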
